AI Authenticity & Validation

VSI evaluates whether a company's AI claims are real, substantive, and defensible. We determine technical reality — not marketing narrative.

The question every investor must ask: "Is this real?"

Five Evaluation Dimensions

API usage vs internal model. External vs controlled inference. Dependency risk quantification.

Classification as wrapper, orchestrator, platform, or infrastructure against the VSI taxonomy.

Marketing claims assessed against real technical capability. Discrepancy identification.

Real-world testing of system behaviour, performance, and consistency.

Dependency risk, data exposure, misleading positioning, and sovereignty classification.

VSI AI Classification

Every company assessed through VSI is classified into one of six categories, creating a standardised language for AI credibility.

Abstract Wrapper

Thin API wrapper with low technical moat and high dependency risk.

AI-Enabled Product

AI is a feature within a broader product. Limited technical moat.

AI-Orchestrated Platform

Multiple AI models coordinated through a platform architecture.

Fine-Tuned Model System

Proprietary fine-tuning on foundation models with domain-specific data.

Controlled AI Infrastructure

Owns and controls the inference and deployment infrastructure.

Sovereign AI Environment

Fully private, sovereign AI system with independent model control.
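
For teams that want to record these classifications in their own tooling, the six categories above could be represented as an enumeration. This is only a minimal sketch: the names `VSIClass` and `describe` are hypothetical, and the numeric ordering (1 for the most dependent, 6 for the most independent) is an assumption drawn from the order in which the categories are listed, not a published VSI scale.

```python
from enum import IntEnum


class VSIClass(IntEnum):
    """The six categories listed above.

    The integer values are an assumption: they simply follow the
    order of the list and are not an official VSI ranking.
    """
    ABSTRACT_WRAPPER = 1
    AI_ENABLED_PRODUCT = 2
    AI_ORCHESTRATED_PLATFORM = 3
    FINE_TUNED_MODEL_SYSTEM = 4
    CONTROLLED_AI_INFRASTRUCTURE = 5
    SOVEREIGN_AI_ENVIRONMENT = 6


# One-line descriptions copied from the category list above.
DESCRIPTIONS = {
    VSIClass.ABSTRACT_WRAPPER:
        "Thin API wrapper with low technical moat and high dependency risk.",
    VSIClass.AI_ENABLED_PRODUCT:
        "AI is a feature within a broader product. Limited technical moat.",
    VSIClass.AI_ORCHESTRATED_PLATFORM:
        "Multiple AI models coordinated through a platform architecture.",
    VSIClass.FINE_TUNED_MODEL_SYSTEM:
        "Proprietary fine-tuning on foundation models with domain-specific data.",
    VSIClass.CONTROLLED_AI_INFRASTRUCTURE:
        "Owns and controls the inference and deployment infrastructure.",
    VSIClass.SOVEREIGN_AI_ENVIRONMENT:
        "Fully private, sovereign AI system with independent model control.",
}


def describe(category: VSIClass) -> str:
    """Return a readable label such as 'Abstract Wrapper: Thin API ...'."""
    title = category.name.replace("_", " ").title().replace("Ai", "AI")
    return f"{title}: {DESCRIPTIONS[category]}"
```

Calling `describe(VSIClass.FINE_TUNED_MODEL_SYSTEM)` returns the category title followed by its one-line description, which keeps reports consistent with the wording used here.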

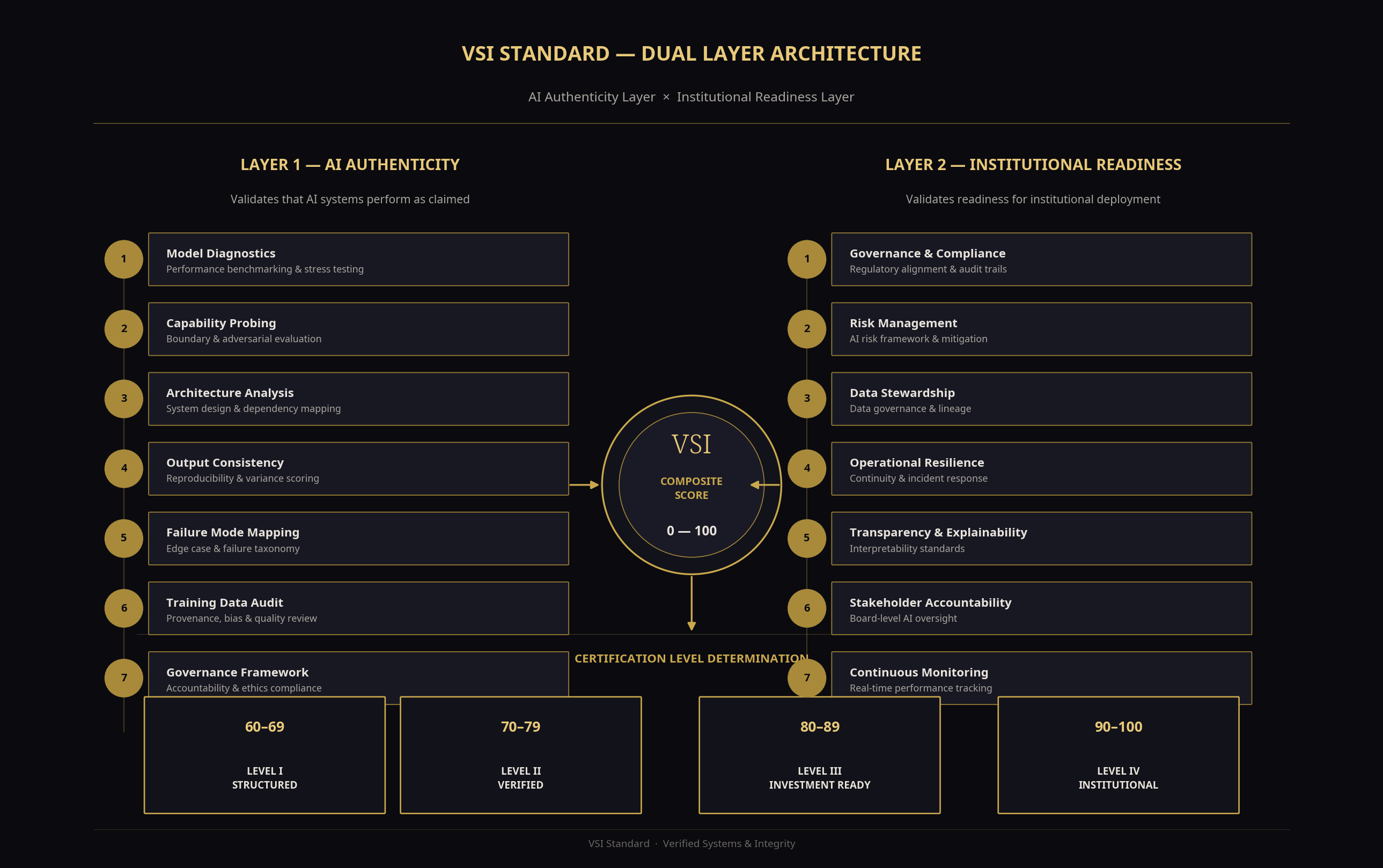

AI Authenticity Score

A composite score from 0–100, weighted across four critical dimensions of technical reality.
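
To illustrate what a weighted composite on a 0–100 scale can look like, here is a minimal sketch using a normalised weighted average. The function name `authenticity_score`, the dimension keys, and the weights are all placeholders chosen for illustration; the four dimensions and their weights are not specified in this text, and VSI's actual method may differ.

```python
def authenticity_score(sub_scores: dict, weights: dict) -> float:
    """Weighted average of per-dimension scores, each on a 0-100 scale.

    Both arguments are keyed by dimension name. The names and weights
    are hypothetical; nothing here reflects VSI's actual weighting.
    """
    if set(sub_scores) != set(weights):
        raise ValueError("sub_scores and weights must cover the same dimensions")
    if any(w < 0 for w in weights.values()):
        raise ValueError("weights must be non-negative")
    total_weight = sum(weights.values())
    if total_weight <= 0:
        raise ValueError("at least one weight must be positive")
    for name, value in sub_scores.items():
        if not 0 <= value <= 100:
            raise ValueError(f"score for {name!r} must be between 0 and 100")
    # Dividing by the total weight keeps the result on the 0-100 scale
    # even when the weights do not sum to 1.
    weighted_sum = sum(sub_scores[name] * weights[name] for name in weights)
    return weighted_sum / total_weight


# Placeholder dimensions with equal weights.
example = authenticity_score(
    {"dimension_a": 80, "dimension_b": 60, "dimension_c": 40, "dimension_d": 20},
    {"dimension_a": 1, "dimension_b": 1, "dimension_c": 1, "dimension_d": 1},
)
print(example)  # 50.0
```

Normalising by the total weight means the weights can be expressed as percentages, ratios, or any other non-negative scale without changing the range of the result.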